1 · the problem

two pressures meet a quadratic wall

test-time scaling pushed token budgets up

- process supervision & long CoT: Let's Verify Step by Step (Lightman 2023)

- OpenAI o1 / o3 — paid compute scales answer quality

- DeepSeek-R1 (Jan 2025): pure-RL reasoning, >32K tokens per answer is normal

- agentic loops: tool calls, repos, deep research → 100K–1M effective context

vanilla attention is the bottleneck

- O(n²) compute, O(n) KV memory per layer

- at 1M tokens, KV cache and bandwidth dominate over weights

- cheap 1M-token inference — not just training — is the gating constraint
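A back-of-envelope sketch of why the KV cache dominates at 1M tokens. The layer count, KV-head count, head dimension and BF16 storage below are hypothetical values for a plain dense-attention model, not V4's configuration.

```python
def kv_cache_bytes(n_tokens, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Bytes needed to cache K and V for one sequence under vanilla attention."""
    # Factor 2 = one K tensor plus one V tensor per layer; linear in n_tokens.
    return 2 * n_layers * n_tokens * n_kv_heads * head_dim * bytes_per_elem

# Hypothetical config (an assumption, NOT V4): 61 layers, 8 KV heads of dim 128, BF16.
per_token = kv_cache_bytes(1, n_layers=61, n_kv_heads=8, head_dim=128)
at_1m = kv_cache_bytes(1_000_000, n_layers=61, n_kv_heads=8, head_dim=128)

print(f"{per_token} bytes per token")
print(f"{at_1m / 1e9:.1f} GB for one 1M-token sequence")
```

Under these assumed numbers a single 1M-token sequence needs roughly 250 GB of cache, and every decoded token has to read all of it.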

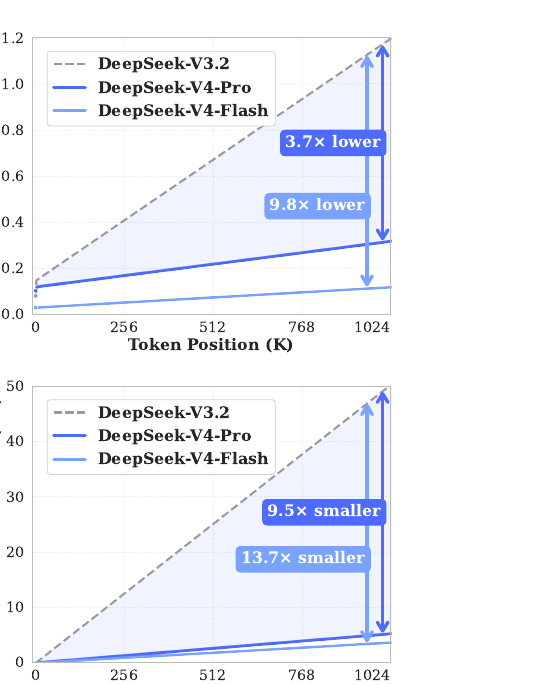

what V4 promises

compress every m tokens into single KV entries before selection. Savings stack multiplicatively with DSA's.

2 · the family — and the headline figure

two MoE models, one recipe

| | V4-Flash | V4-Pro | (V3.2 ref) |

|---|---|---|---|

| total params | 284B | 1.6T | 671B |

| activated / token | 13B | 49B | 37B |

| transformer layers | 43 | 61 | — |

| hidden d | 4096 | 7168 | — |

| routed experts | 256 (top-6) | 384 (top-6) | — |

| pre-train tokens | 32T | 33T | — |

| context | 1M | 1M | 128K |

- Flash: efficient reasoning at smaller budget

- Pro: flagship; "Pro-Max" = max reasoning effort

First two layers use pure sliding-window attention. The first three MoE layers use Hash routing instead of learned routing.

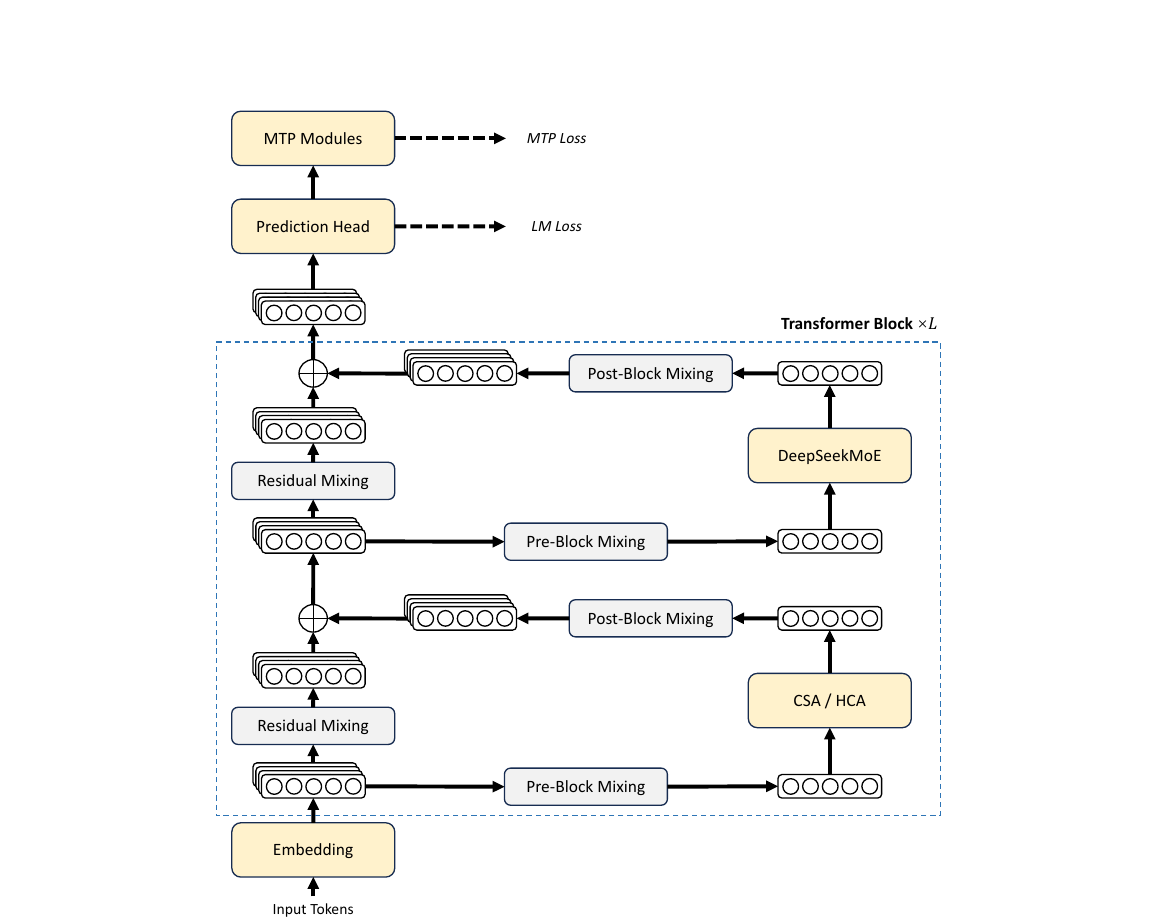

3 · architecture, big picture

V3 lineage with three deliberate upgrades

kept from V3

- DeepSeekMoE: shared + fine-grained routed experts (Dai+ 2024)

- Multi-Token Prediction (MTP) auxiliary loss

- auxiliary-loss-free load balancing + slight seq-wise loss

new in V4

- ① Hybrid attention: CSA + HCA interleaved (replaces V3.2's DSA-on-MLA)

- ② mHC: manifold-constrained hyper-connections (residual upgrade)

- ③ Muon optimizer (with AdamW for embeddings, head, RMSNorm, mHC statics)

small but notable

- routing affinity: Sigmoid → Sqrt(Softplus)

- removed cap on routing target nodes

- first 3 MoE layers use Hash routing
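A minimal sketch of the Sqrt(Softplus) routing affinity with top-6 selection. The renormalization of gate weights over the selected experts and the function name `route_topk` are assumptions for illustration; the deck only states the change of affinity function.

```python
import numpy as np

def route_topk(logits, k=6):
    """Pick k experts per token using sqrt(softplus(logit)) as the affinity."""
    # softplus(x) = log(1 + e^x), computed stably as logaddexp(0, x); always > 0.
    affinity = np.sqrt(np.logaddexp(0.0, logits))
    topk = np.argsort(-affinity, axis=-1)[:, :k]            # expert ids, best first
    gates = np.take_along_axis(affinity, topk, axis=-1)
    # Assumption: gate weights are renormalized over the selected experts.
    gates = gates / gates.sum(axis=-1, keepdims=True)
    return topk, gates

rng = np.random.default_rng(0)
logits = rng.normal(size=(5, 256))      # 5 tokens, 256 routed experts (Flash)
experts, gates = route_topk(logits)
print(experts.shape, gates.sum(axis=-1))
```

Unlike a sigmoid, this affinity is unbounded above and grows only like the square root of the logit for large positive values.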

4 · background — attention & KV cache

the concepts every later slide builds on

standard self-attention

for each query token t, scores against all preceding keys:

- compute: n × n — quadratic in sequence length

- decode: cache K, V per layer → KV cache grows with n

- at long n, KV memory & bandwidth dominate, not weights
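For reference, a minimal single-head NumPy sketch of causal self-attention. It makes the n × n score matrix explicit; shapes and names are illustrative only.

```python
import numpy as np

def causal_attention(q, k, v):
    """Single-head causal attention; q, k, v have shape (n, d)."""
    n, d = q.shape
    scores = q @ k.T / np.sqrt(d)                      # (n, n): the quadratic term
    future = np.triu(np.ones((n, n), dtype=bool), k=1) # True where key is after query
    scores = np.where(future, -np.inf, scores)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
q, k, v = (rng.normal(size=(6, 8)) for _ in range(3))
out, weights = causal_attention(q, k, v)
print(out.shape, weights.shape)
```

At decode time only the last row of `scores` is computed, but it still needs every cached row of `k` and `v`, which is why the cache grows with n.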

known knobs to attack the cost

- MQA / GQA / MLA: shrink KV across heads or via low-rank latent

- sliding window: each token sees only the last w tokens

- sparse selection: pick top-k relevant keys per query (DSA)

- compression / pooling: pool blocks of m tokens → 1 entry

- linear / SSM: rewrite as kernel or recurrent state; constant memory but lossy recall

V4 = compression + sparse selection + sliding window + attention sink, in two complementary regimes.

4b · prior work map for long-context attention

what people tried before V4

| year | method | idea in one line | arxiv |

|---|---|---|---|

| 2019 | Sparse Transformers (Child+) | fixed strided/factorized patterns, O(n√n) | 1904.10509 |

| 2020 | Reformer | LSH bucketed attention, O(n log n) | 2001.04451 |

| 2020 | Longformer / BigBird | sliding window + global tokens, linear cost | 2004.05150 · 2007.14062 |

| 2020 | Linformer / Performer / Linear T. | low-rank or kernel approximation of softmax | 2006.04768 · 2009.14794 · 2006.16236 |

| 2023 | RWKV / Mamba / Mamba-2 | recurrent / selective SSMs, constant state | 2305.13048 · 2312.00752 · 2405.21060 |

| 2024 | Attention Sink / StreamingLLM | keep first tokens to absorb attention mass | 2309.17453 |

| 2024 | MLA (DeepSeek-V2) | low-rank latent KV with decoupled RoPE | 2405.04434 |

| 2025 | NSA — Native Sparse Attention | compress + select + window, end-to-end trainable | 2502.11089 |

| 2025 | MiniMax-01 (Lightning Attn) | linear attention at 456B-MoE, 4M extrapolate | 2501.08313 |

| 2025 | DSA (DeepSeek-V3.2) | lightning indexer + top-k on top of MLA | 2512.02556 |

| 2026 | CSA + HCA (V4) | two interleaved compression regimes + indexer in FP4 | (this paper) |

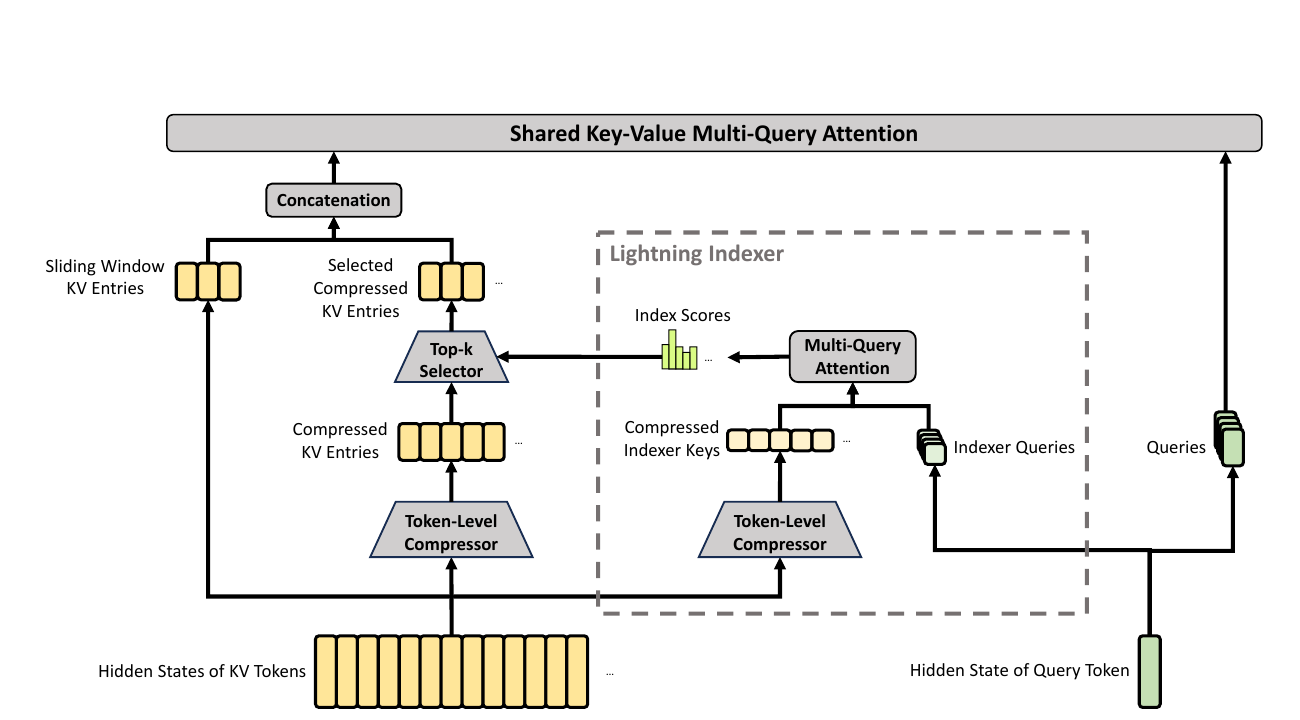

5 · the headline idea — hybrid attention

two compression regimes, interleaved across layers

CSA — Compressed Sparse Attention

- compress every m KV tokens → 1 entry (m = 4)

- then a lightning indexer picks top-k compressed entries

- + small sliding window for local detail

- preserves fidelity; sparsity is the speedup

HCA — Heavily Compressed Attention

- compress every m' tokens → 1 entry (m' = 128)

- dense attention over compressed sequence (no top-k)

- + small sliding window

- extreme cache reduction; relies on heavy summarization
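A small arithmetic sketch of how many cache entries each regime keeps per layer at 1M tokens. The sliding-window size of 128 is a hypothetical value chosen for illustration; the block sizes m = 4 and m' = 128 come from the slide.

```python
import math

def cached_entries(n_tokens, block, window):
    """Compressed entries plus raw sliding-window entries for one layer."""
    return math.ceil(n_tokens / block) + window

n = 1_000_000
window = 128                                   # hypothetical window size
csa = cached_entries(n, block=4, window=window)
hca = cached_entries(n, block=128, window=window)

print(f"CSA layer: {csa} entries ({csa / n:.1%} of raw)")
print(f"HCA layer: {hca} entries ({hca / n:.2%} of raw)")
```

A CSA layer still stores about a quarter of the raw length but attends only to its top-k entries; an HCA layer stores under 1% and attends to all of it.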

what is genuinely new vs prior sparse attention

- two interleaved compression regimes: NSA had one compression branch alongside select+window; V4 interleaves two compressors at different block sizes across layers (CSA at m = 4, HCA at m' = 128)

- lightning indexer in FP4: V3.2 introduced the indexer; V4 pushes it to FP4 — index scores are smooth ranking signals, FP4's coarse grid is harmless

- overlapping windows on the second compressed stream (Cᵇ) — prior work uses disjoint blocks

- RoPE applied at position −i on outputs recovers relative position after compression — not seen in prior literature

- attention sink inside compressed-block attention (StreamingLLM applied it to raw tokens only)

5a · CSA in detail

compress, then sparsely select

step 1 — token-level compressor

- two KV streams Cᵃ, Cᵇ + softmax weights Zᵃ, Zᵇ

- each compressed entry pools 2m raw entries (overlapping windows on Cᵇ)

- net: sequence length reduced to n/m

step 2 — lightning indexer

- cheap MQA-style scorer in FP4

- index score I_{t,s} via low-rank query ↔ compressed key

- retain top-k (Flash: 512, Pro: 1024)

step 3 — core attention

- shared-KV MQA over selected blocks + sliding window

- grouped output projection keeps the projection matrix tractable
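The three steps above can be sketched for a single decode query as follows. This is a heavily simplified illustration under stated assumptions: one compressed stream instead of Cᵃ and Cᵇ, disjoint blocks instead of overlapping windows, full precision instead of FP4, one head, and keys reused as values. All parameter names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def csa_decode_step(q, q_idx, kv, pool_logits, k_idx_proj, m=4, top_k=2, window=4):
    """One query over top-k compressed entries plus a raw sliding window."""
    n, d = kv.shape
    n_blocks = n // m
    # Step 1: softmax-weighted pooling, each block of m raw entries -> 1 entry.
    blocks = kv[: n_blocks * m].reshape(n_blocks, m, d)
    w = softmax(pool_logits[: n_blocks * m].reshape(n_blocks, m), axis=1)
    compressed = (w[..., None] * blocks).sum(axis=1)        # (n_blocks, d)
    # Step 2: cheap indexer scores in a low-dimensional space, keep top-k blocks.
    idx_keys = compressed @ k_idx_proj                       # (n_blocks, d_idx)
    scores = idx_keys @ q_idx                                # (n_blocks,)
    keep = np.argsort(-scores)[:top_k]
    # Step 3: attention over selected entries + last `window` raw tokens.
    # (Overlap between the window and the selected blocks is ignored here.)
    ctx = np.concatenate([compressed[keep], kv[-window:]], axis=0)
    attn = softmax(ctx @ q / np.sqrt(d))
    return attn @ ctx, keep

rng = np.random.default_rng(0)
n, d, d_idx = 32, 16, 4
out, keep = csa_decode_step(
    q=rng.normal(size=d), q_idx=rng.normal(size=d_idx), kv=rng.normal(size=(n, d)),
    pool_logits=rng.normal(size=n), k_idx_proj=rng.normal(size=(d, d_idx)),
)
print(out.shape, sorted(keep.tolist()))
```

The query touches top_k + window entries in step 3 regardless of n; only the indexer scan in step 2 scales with n/m.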

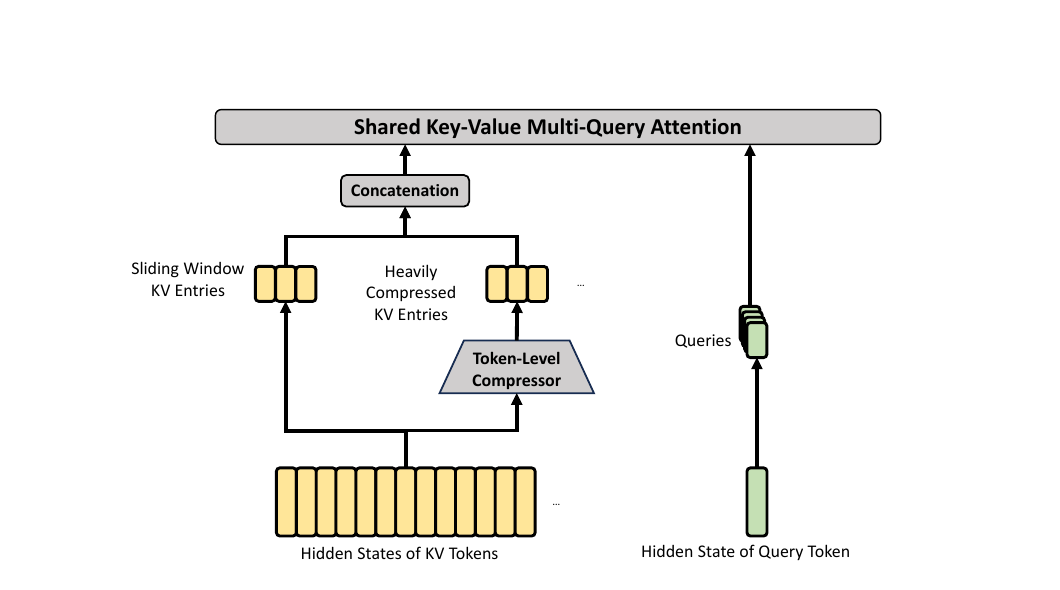

5b · HCA + the small-but-important bits

and where the 2% KV figure comes from

HCA flow

- single compressor, no two-stream overlap

- m' = 128 → 128× cache shrink on the compressed branch

- dense MQA over compressed entries + sliding window

- same shared-KV + grouped output projection as CSA

shared tricks for both

- RMSNorm on Q & KV → no exploding logits, no QK-Clip needed

- partial RoPE on last 64 dims; trick of adding RoPE at position −i on outputs to recover relative position after compression

- extra sliding-window branch for local fidelity

- attention sink logit added to denominator — heads can attend to ~0
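A minimal sketch of what "sink logit added to the denominator" means for one query's attention weights. The function name and the scalar-per-head sink are assumptions for illustration.

```python
import numpy as np

def softmax_with_sink(scores, sink_logit):
    """Softmax over `scores` with one extra logit that absorbs mass but has no value."""
    shift = max(float(scores.max()), float(sink_logit))      # numerical stability
    e = np.exp(scores - shift)
    return e / (e.sum() + np.exp(sink_logit - shift))

scores = np.array([0.5, -1.0, 2.0])
weak_sink = softmax_with_sink(scores, sink_logit=-1e9)   # behaves like plain softmax
strong_sink = softmax_with_sink(scores, sink_logit=20.0) # head attends to almost nothing
print(weak_sink.sum(), strong_sink.sum())
```

Because the sink contributes to the normalizer but not to the output, the weights over real entries can sum to less than 1, so a head can effectively opt out.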

6 · residual upgrade — mHC

manifold-constrained hyper-connections

recap: hyper-connections (Zhu+ ICLR 2025)

- generalize residual stream R^d → R^(n_hc × d)

- three small linear maps per layer: A (input), B (residual), C (output)

- update: X_{l+1} = B·X_l + C·𝓕_l(A·X_l)

- breaks the gradient-vanishing / collapse seesaw; subsumes DenseNet

- matches baseline LM loss with ~½ the tokens — but unconstrained B drifts past ‖·‖2=1, breaking trillion-param stacks

mHC fix (Xie+ 2026)

- constrain B to doubly stochastic matrices (Birkhoff polytope)

- ‖B‖2 ≤ 1 → residual map is non-expansive

- set is closed under multiplication → arbitrarily deep stacks stay stable

- A, C bounded non-negative via sigmoid → no signal cancellation

how they enforce it

- A = σ(Ã), C = 2σ(C̃)

- project B̃ via Sinkhorn-Knopp (1964/67) — same algo as entropic OT (Cuturi 2013)

- M(0) = exp(B̃)

- iterate row + col normalize (tmax=20)
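The projection can be sketched in a few lines of NumPy. The stream count n_hc = 4 and the random B̃ are hypothetical; the iteration count of 20 is the slide's t_max.

```python
import numpy as np

def sinkhorn_knopp(b_tilde, t_max=20):
    """Map an unconstrained matrix to an (approximately) doubly stochastic one."""
    m = np.exp(b_tilde)                           # M(0): strictly positive entries
    for _ in range(t_max):
        m = m / m.sum(axis=1, keepdims=True)      # normalize rows
        m = m / m.sum(axis=0, keepdims=True)      # normalize columns
    return m

rng = np.random.default_rng(0)
B1 = sinkhorn_knopp(rng.normal(scale=0.5, size=(4, 4)))   # n_hc = 4 is an assumption
B2 = sinkhorn_knopp(rng.normal(scale=0.5, size=(4, 4)))

print(B1.sum(axis=0), B1.sum(axis=1))             # both close to all-ones
print(np.linalg.norm(B1, 2))                      # spectral norm close to 1
print((B1 @ B2).sum(axis=1))                      # product is doubly stochastic too
```

The last line illustrates the closure property: stacking many such residual maps gives another doubly stochastic matrix, so the composed map stays non-expansive.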

siblings in Lipschitz-control land

- spectral norm reg (Miyato+ 2018)

- weight orthogonalization

- ReZero (Bachlechner+ 2020)

7 · optimizer — Muon

orthogonalized updates for hidden weights, at trillion scale

recipe

G_t = ∇L(W_{t−1})

M_t = μ M_{t−1} + G_t

O'_t = HybridNewtonSchulz(μ M_t + G_t) # ≈ U Vᵀ from SVD

O_t = O'_t · √max(n,m) · γ

W_t = W_{t−1}(1 − ηλ) − η O_t

hybrid Newton-Schulz

- 10 iterations, 2 stages:

- fast (×8): (3.4445, −4.7750, 2.0315) — push σ toward 1 from anywhere

- stabilize (×2): (2, −1.5, 0.5) — lock σ ≈ 1

- approximates the orthogonal factor U Vᵀ of the SVD without computing an SVD
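A NumPy sketch of the recipe and the two-stage iteration. It assumes the quintic update form X ← aX + bX(XᵀX) + cX(XᵀX)² from the public Muon writeup and a Frobenius-norm pre-scaling; the values of η, μ, λ and γ are hypothetical, and this is not claimed to match V4's kernel.

```python
import numpy as np

FAST = (3.4445, -4.7750, 2.0315)    # stage 1 coefficients, applied 8 times
STABLE = (2.0, -1.5, 0.5)           # stage 2 coefficients, applied 2 times

def hybrid_newton_schulz(g, fast_steps=8, stable_steps=2, eps=1e-7):
    """Approximate U @ V.T for g = U S V.T using only matrix multiplies."""
    x = g / (np.linalg.norm(g) + eps)     # Frobenius norm: singular values <= 1
    for a, b, c in [FAST] * fast_steps + [STABLE] * stable_steps:
        s = x.T @ x
        # Acts on each singular value as sigma -> a*sigma + b*sigma^3 + c*sigma^5.
        x = a * x + b * (x @ s) + c * (x @ s @ s)
    return x

def muon_step(w, m, grad, lr=0.02, mu=0.95, wd=0.1, gamma=0.2):
    """One update following the recipe; all hyperparameter values are assumptions."""
    m = mu * m + grad
    o = hybrid_newton_schulz(mu * m + grad)
    o = o * np.sqrt(max(w.shape)) * gamma
    w = w * (1.0 - lr * wd) - lr * o
    return w, m

rng = np.random.default_rng(0)
grad = rng.normal(size=(16, 8))
sv = np.linalg.svd(hybrid_newton_schulz(grad), compute_uv=False)
print(sv.min(), sv.max())

w, m = muon_step(rng.normal(size=(16, 8)), np.zeros((16, 8)), grad)
```

The fast coefficients alone leave singular values oscillating in a band around 1; the second polynomial has a fixed point with zero slope at σ = 1, which is what pulls them tight.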

why this is exciting

- orthogonalization = steepest descent in spectral norm (vs Adam's element-wise rescaling) — sharper geometry for matrix-valued params

- theoretical lineage: Shampoo, Bernstein & Newhouse Modular Duality (2024) — Muon is the lightweight, preconditioner-free realization

- first credible AdamW challenger to win at frontier scale:

- Liu+ 2025 (Moonlight, 16B-active) — ~2× token efficiency vs AdamW

- V4 (1.6T total) — largest known Muon deployment to date

- RMSNorm on Q/KV removed need for QK-Clip stabilization

training-stability extras

- anticipatory routing — route with previous-step params; auto-engages around loss spikes

- SwiGLU clamping — linear branch ∈ [−10, 10], gate ≤ 10
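A sketch of SwiGLU clamping with the bounds from the slide. It assumes the clamps apply to the two pre-activation projections and that the gate is only bounded from above; weight shapes and names are illustrative.

```python
import numpy as np

def clamped_swiglu(x, w_gate, w_lin, limit=10.0):
    """SwiGLU hidden activation with bounded branches."""
    gate = np.minimum(x @ w_gate, limit)          # gate <= 10 (no lower clamp)
    lin = np.clip(x @ w_lin, -limit, limit)       # linear branch in [-10, 10]
    # SiLU(g) = g * sigmoid(g); sigmoid written via tanh to avoid overflow.
    silu = gate * 0.5 * (1.0 + np.tanh(0.5 * gate))
    return silu * lin

rng = np.random.default_rng(0)
x = 50.0 * rng.normal(size=(4, 8))                # deliberately large activations
h = clamped_swiglu(x, rng.normal(size=(8, 32)), rng.normal(size=(8, 32)))
print(np.abs(h).max())
```

With both branches bounded, the product cannot exceed 100 in magnitude, which caps how far a single outlier can propagate through an expert.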

8 · infrastructure

what makes 1.6T × 1M tractable

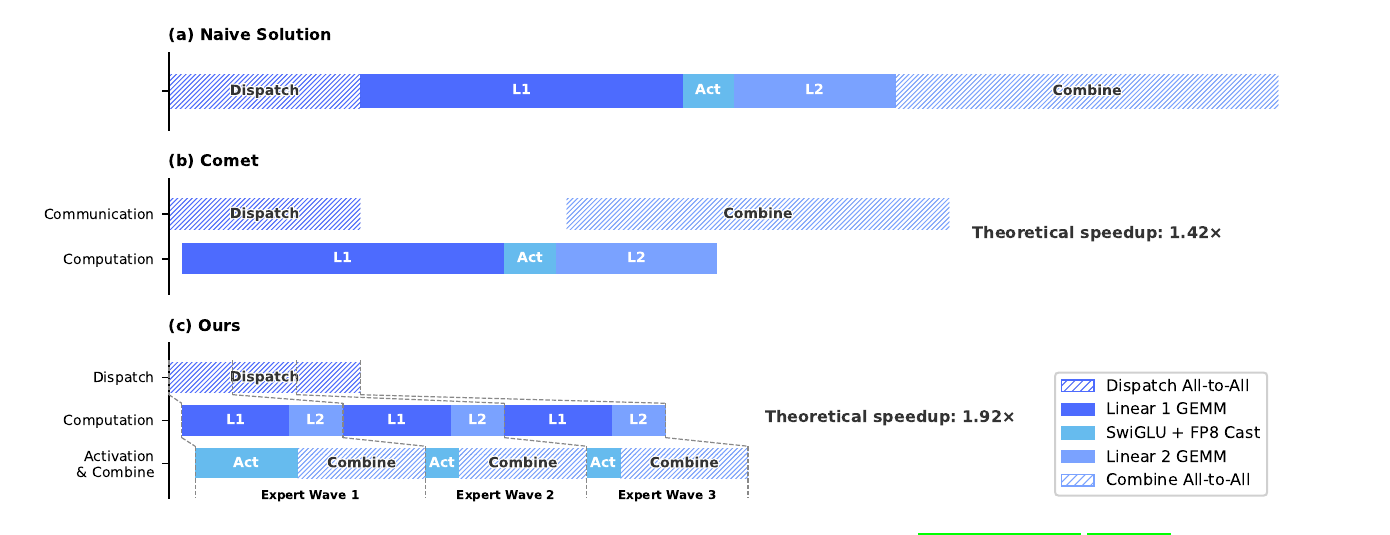

MoE expert parallelism

- fuse dispatch + linear-1/2 + combine into one pipelined kernel

- split experts into waves; comm of next wave hides under compute of current

- theoretical 1.92× speedup vs naive — vs Comet's 1.42×

- lineage: GShard, Switch, TUTEL, FasterMoE, COMET, DeepEP

kernels & precision

- TileLang — tiled DSL, 1.36–1.70× over Triton/FlashAttn-3

- batch-invariant deterministic kernel libs → bitwise reproducible train↔infer

- FP4 (MXFP4) QAT for MoE expert weights and indexer QK path

training framework

- tensor-level activation checkpointing (extended autograd)

- hybrid ZeRO tailored to Muon

- fused / recompute path for cheap mHC

- two-stage contextual parallelism for compressed attention at 1M

why each is exciting

- EP overlap: 1.92× suggests freeing SMs from comm duty entirely (hook-based, not just better tiles)

- FP4 surgically: index scores are smooth ranking signals → FP4's coarse grid is harmless where it would wreck attention logits

- batch-invariant kernels: enables on-policy RL without importance-sampling correction, exact bisection of loss spikes, reproducible evals

- 3FS: open-sourced 7.3 TB/s aggregate-read filesystem underpins teacher-weight streaming and DSec sandboxes
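Why batch invariance is not automatic: floating-point addition is not associative, so a kernel that changes its reduction order with batch size can change the bits of the result. A tiny pure-Python illustration (not DeepSeek's kernels):

```python
# Same three numbers, two reduction orders.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)
print(left_to_right == right_to_left)   # False

def fixed_order_sum(values):
    """Reduce in one fixed left-to-right order, whatever the batching."""
    total = 0.0
    for v in values:
        total += v
    return total

row = [0.1, 0.2, 0.3]
# The row is reduced identically whether it is processed alone or inside a batch.
alone = fixed_order_sum(row)
in_batch = [fixed_order_sum(r) for r in [[1.0, 2.0], row]][1]
print(alone == in_batch)                # True
```

A batch-invariant kernel commits to one reduction order per row, trading some scheduling freedom for bitwise-identical results between training and inference.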

9 · pre-training

data, schedule, stability

data (32–33 T tokens)

- builds on V3 corpus; long-document curation emphasized

- filters batched auto-generated / templated web content (Zhu+ 2024)

- more multilingual (long-tail cultures), more scientific/tech

- agentic data injected at mid-training for code

- vocab 128K; FIM + token-splitting from V3; sample-level attention masking

schedule

- batch warmup → 75.5M (Flash) / 94.4M (Pro) tokens

- seq length: 4K → 16K → 64K → 1M

- attention sparsity introduced only at 64K, after dense warmup

- indexer warm-up before turning on top-k selection

- cosine decay near the end; MTP loss 0.3 → 0.1 at LR decay

stability lessons

- spikes correlate with MoE-layer outliers; routing reinforces them

- anticipatory routing + SwiGLU clamping kill spikes with negligible overhead

- mHC + RMSNorm-on-QK + Muon together remove the need for QK-Clip used in Liu+ 2025

10 · base-model results

V4-Flash-Base ≥ V3.2-Base on most benchmarks at 13B activated

| benchmark | V3.2-Base (37B) | V4-Flash-Base (13B) | V4-Pro-Base (49B) |

|---|---|---|---|

| MMLU (5-shot) | 87.8 | 88.7 | 90.1 |

| MMLU-Pro | 65.5 | 68.3 | 73.5 |

| SimpleQA-verified | 28.3 | 30.1 | 55.2 |

| SuperGPQA | 45.0 | 46.5 | 53.9 |

| FACTS-Parametric | 27.1 | 33.9 | 62.6 |

| HumanEval | 62.8 | 69.5 | 76.8 |

| GSM8K | 91.1 | 90.8 | 92.6 |

| MATH | 60.5 | 57.4 | 64.5 |

| LongBench-V2 | 40.2 | 44.7 | 51.5 |

Flash matches or beats V3.2 on 7 of 9 benchmarks (it trails on GSM8K and MATH) with ~1/3 the activated params; Pro raises every category, especially knowledge & long context.

11 · post-training pipeline

specialists, then merge by on-policy distillation

stage 1 — specialist training

- per domain (math, code, agent, instruction-follow): SFT → RL with GRPO (Shao+ 2024)

- three reasoning effort modes trained with different length penalties: non-think, think-high, think-max

- Generative Reward Model (GRM): actor itself plays judge for hard-to-verify tasks — direct application of GenRM (Zhang+ 2024)

stage 2 — On-Policy Distillation (OPD)

- cited: Lu & Thinking Machines Lab, Oct 2025

- replaces V3.2's mixed-RL stage entirely

- student samples its own trajectories; teachers (10+) provide per-token KL feedback

- uses full-vocabulary reverse-KL (not token-level estimate) → lower variance

- algorithmic ancestor: MiniLLM (Gu+ 2023, on-policy reverse-KL distillation)
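A minimal sketch of the full-vocabulary reverse-KL signal, computed per token position from student and teacher logits. Shapes, names and the tiny vocabulary are illustrative; this is not the TileLang kernel.

```python
import numpy as np

def log_softmax(logits):
    z = logits - logits.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def reverse_kl_per_token(student_logits, teacher_logits):
    """KL(student || teacher) summed over the whole vocabulary, per position."""
    log_ps = log_softmax(student_logits)
    log_pt = log_softmax(teacher_logits)
    # Expectation under the STUDENT distribution: that is what makes it "reverse".
    return (np.exp(log_ps) * (log_ps - log_pt)).sum(axis=-1)

rng = np.random.default_rng(0)
student = rng.normal(size=(3, 50))      # 3 positions, toy vocabulary of 50
teacher = rng.normal(size=(3, 50))
kl = reverse_kl_per_token(student, teacher)
print(kl)
```

A token-level estimate would use only the log-ratio at the sampled token; summing over the full vocabulary computes the same expectation exactly, which is where the lower variance comes from.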

infra for OPD at scale

- teacher weights live in centralized 3FS, ZeRO-sharded, loaded on demand

- cache last-layer teacher hidden states; rebuild full logits via the prediction head on the fly (avoids materializing 100k-vocab × N-teachers logits)

- order samples by teacher index → only one teacher head in GPU mem at a time

- specialized TileLang KL kernel

RL infra

- FP4 rollouts; preemptible & fault-tolerant rollout service with token-granular WAL

- DSec sandbox: container / microVM / fullVM, hundreds of thousands per cluster on 3FS

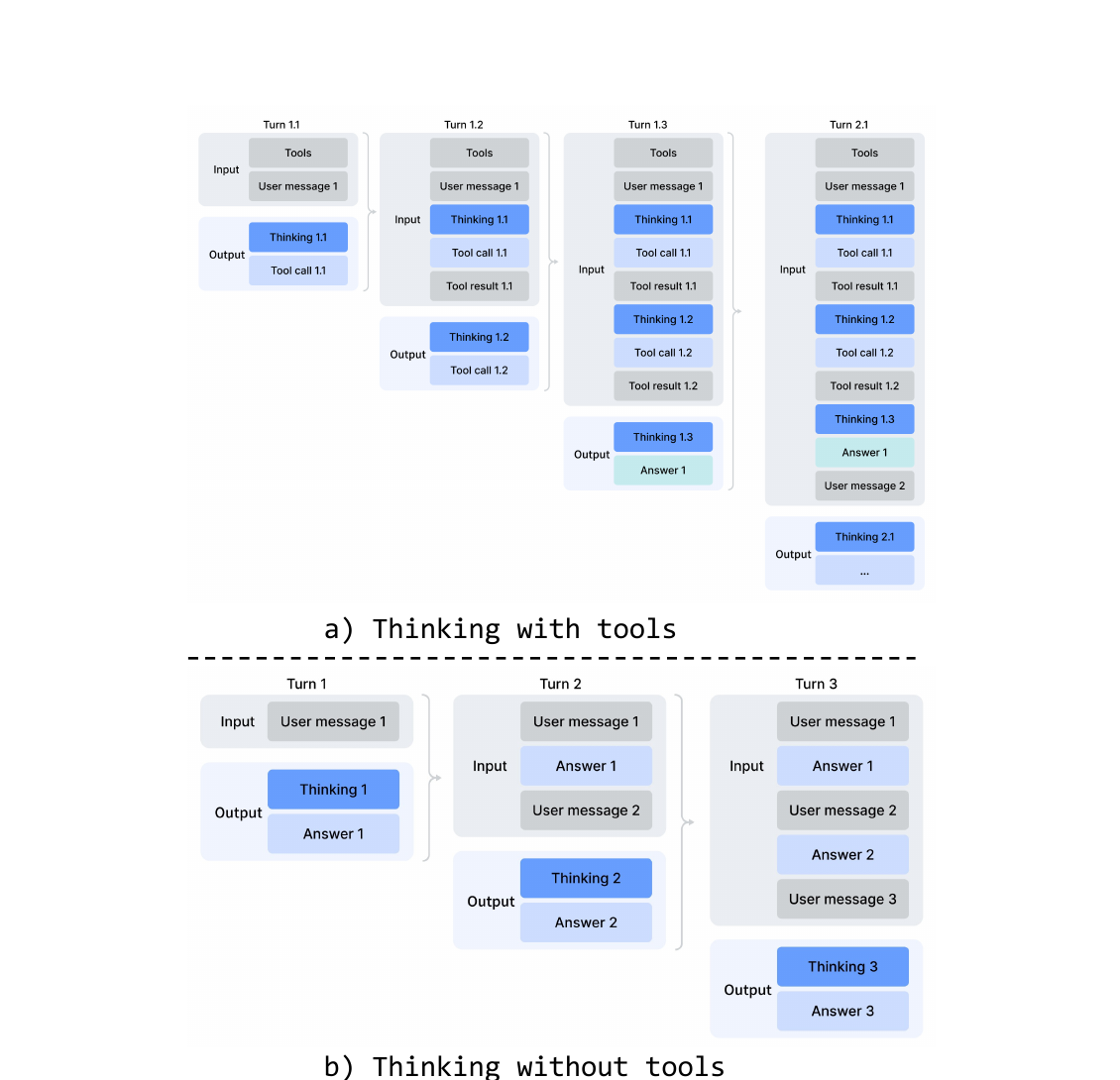

12 · reasoning modes & agent integration

three modes, one model

| mode | use | format |

|---|---|---|

| non-think | routine, low-risk | </think> summary |

| think-high | complex, planning | <think>…</think> summary |

| think-max | frontier reasoning | + injected "be thorough" prompt |

API-surface convergence with OpenAI's reasoning_effort and Anthropic's budget_tokens for extended thinking.

tool-call schema

- new <|DSML|> XML format → fewer escape errors than JSON tool calls

- interleaved thinking: reasoning preserved across tool turns within an agentic task; flushed on new user message in chat

quick instruction

Special tokens that reuse the existing KV cache for auxiliary tasks — no separate small model, no re-prefill:

<|action|> need search? ·

<|query|> gen query ·

<|authority|> need authoritative source? ·

<|domain|> classify ·

<|extracted_url|> / <|read_url|> ·

<|title|> conv title

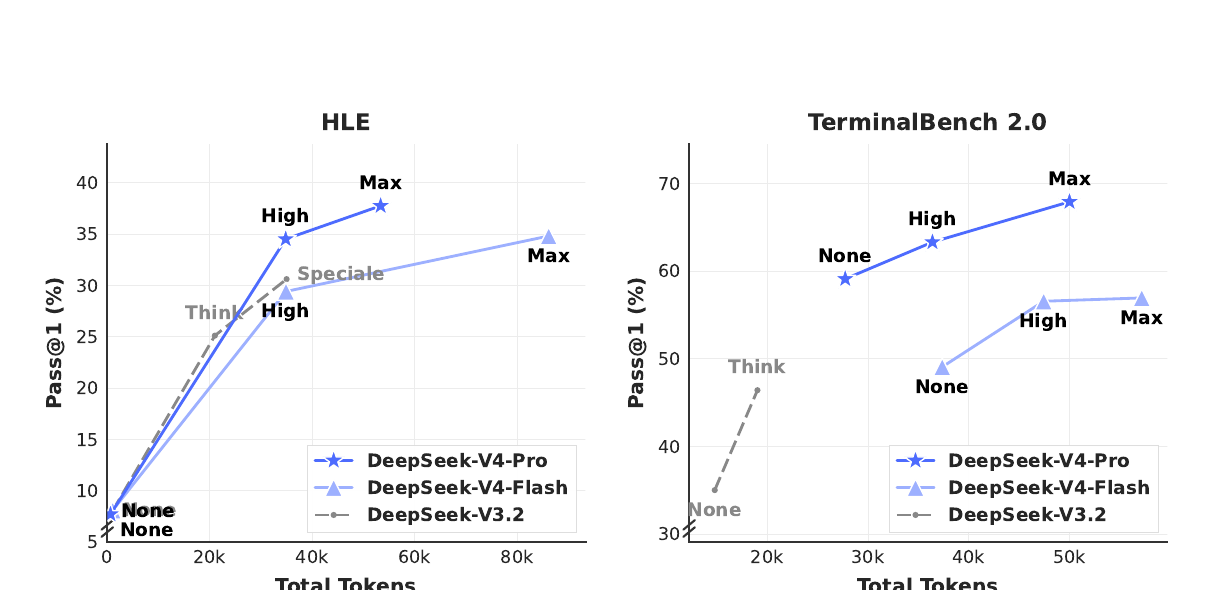

13 · headline post-trained results

V4-Pro-Max vs frontier closed/open models

| benchmark | Opus 4.6 Max | GPT-5.4 xHi | Gemini 3.1-Pro Hi | K2.6 | GLM-5.1 | DS-V4-Pro Max |

|---|---|---|---|---|---|---|

| MMLU-Pro | 89.1 | 87.5 | 91.0 | 87.1 | 86.0 | 87.5 |

| SimpleQA-verified | 46.2 | 45.3 | 75.6 | 36.9 | 38.1 | 57.9 |

| GPQA Diamond | 91.3 | 93.0 | 94.3 | 90.5 | 86.2 | 90.1 |

| HLE | 40.0 | 39.8 | 44.4 | 36.4 | 34.7 | 37.7 |

| LiveCodeBench | 88.8 | — | 91.7 | 89.6 | — | 93.5 |

| Codeforces (rating) | — | 3168 | 3052 | — | — | 3206 |

| Apex Shortlist | 85.9 | 78.1 | 89.1 | 75.5 | 72.4 | 90.2 |

| SWE-Verified | 80.8 | — | 80.6 | 80.2 | — | 80.6 |

| Terminal Bench 2.0 | 65.4 | 75.1 | 68.5 | 66.7 | 63.5 | 67.9 |

| BrowseComp | 83.7 | 82.7 | 85.9 | 83.2 | 79.3 | 83.4 |

| MRCR 1M | 92.9 | — | 76.3 | — | — | 83.5 |

SOTA among open models on knowledge. Lead on code/math (Codeforces 3206 ≈ rank-23 worldwide, LiveCodeBench, Apex). Trails Gemini-3.1-Pro on broad knowledge by ~3–6 months of progress.

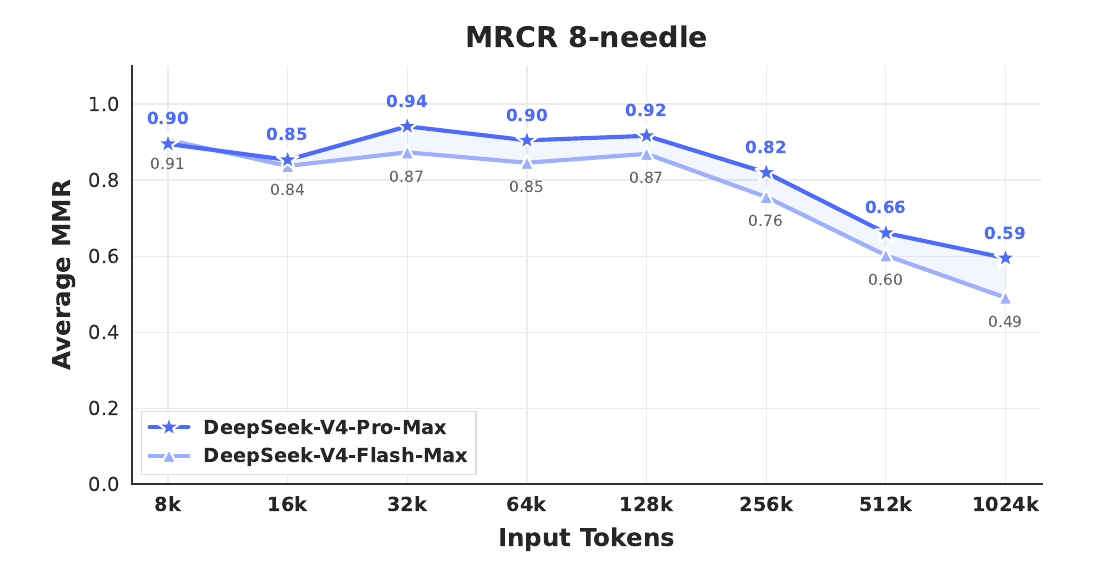

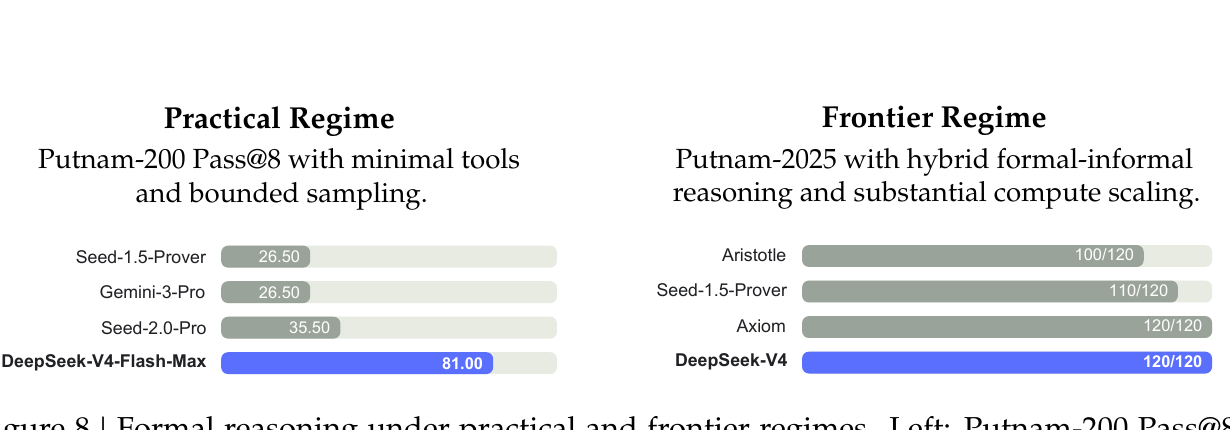

14 · long-context behavior

1M tokens isn't just declared, it works

where it actually pays off

- Codeforces 3206 (≈ rank 23 worldwide) — first open model on parity with frontier closed

- Putnam-2025 with hybrid formal+informal: 120/120 perfect under their pipeline

- think-max gives a real, monotonic lift on hard reasoning

- token efficiency on HLE better than V3.2 — same accuracy, fewer thinking tokens

15 · takeaways

what V4 actually contributes

- hybrid CSA / HCA — credible recipe for million-token attention at training-and-inference cost that fits open hardware. Two compression regimes interleaved is a genuinely new composition.

- mHC — first deployment of doubly-stochastic-projected residuals at trillion scale; turns hyper-connections from research promise into production-stable.

- Muon at 1.6T — the largest known win for the first credible AdamW challenger; with hybrid Newton-Schulz orthogonalization.

- OPD over mixed RL — pipeline-level innovation: train specialists separately, merge by behavioral distillation. Plus full-vocab logit distillation at scale.

- infra firsts: 1.92× EP overlap, FP4 indexer, batch-invariant deterministic train/infer parity.

where it sits

- open SOTA on knowledge, code, math; ~3–6 months behind GPT-5.4 / Gemini-3.1-Pro on hardest reasoning

- 1M context is real but quality drops past 256K — Opus still leads on retrieval at 1M

- FP4×FP8 has the same peak FLOPs as FP8×FP8 today; future hardware could give another 1/3 headroom

read next

- open inference reference: huggingface.co/deepseek-ai/DeepSeek-V4-Pro

- V3.2 paper for DSA baseline (arXiv:2512.02556)

- NSA (arXiv:2502.11089) — direct technical predecessor

- HC (arXiv:2409.19606), mHC (arXiv:2512.24880)

- Muon writeup (Jordan 2024); Liu+ 2025 (arXiv:2502.16982)

- OPD blog — Thinking Machines Lab, Oct 2025

appendix · full reference list

everything cited in this deck, grouped

attention & long context

- Vaswani+ 2017 — Transformer · 1706.03762

- Child+ 2019 — Sparse Transformers · 1904.10509

- Kitaev+ 2020 — Reformer · 2001.04451

- Beltagy+ 2020 — Longformer · 2004.05150

- Zaheer+ 2020 — BigBird · 2007.14062

- Wang+ 2020 — Linformer · 2006.04768

- Choromanski+ 2020 — Performer · 2009.14794

- Katharopoulos+ 2020 — Linear Transformers · 2006.16236

- Shazeer 2019 — MQA · 1911.02150

- Ainslie+ 2023 — GQA · 2305.13245

- Su+ 2021 — RoPE · 2104.09864

- Xiao+ 2024 — Attention Sinks · 2309.17453

- Peng+ 2023 — RWKV · 2305.13048

- Gu & Dao 2023 — Mamba · 2312.00752

- Dao & Gu 2024 — Mamba-2 · 2405.21060

- Jiang+ 2023 — Mistral 7B · 2310.06825

- Gemma 2 — Google 2024 · 2408.00118

- Gemini 1.5 — Google 2024 · 2403.05530

- MiniMax-01 — 2025 · 2501.08313

- Qwen2.5-1M — 2025 · 2501.15383

- Kimi K2 — 2025 · 2507.20534

- RWKV-7 Goose — 2025 · 2503.14456

DeepSeek series

- DeepSeekMoE 2024 · 2401.06066

- DeepSeek-V2 (MLA) · 2405.04434

- DeepSeek-V3 · 2412.19437

- DeepSeek-R1 · 2501.12948

- NSA 2025 · 2502.11089

- DeepSeek-V3.2 (DSA) · 2512.02556

residuals & stability

- He+ 2015 — ResNet · 1512.03385

- Huang+ 2016 — DenseNet · 1608.06993

- Birkhoff 1946 — Birkhoff polytope theorem

- Sinkhorn 1964 / Sinkhorn-Knopp 1967

- Cuturi 2013 — Sinkhorn distances · 1306.0895

- Miyato+ 2018 — Spectral Norm · 1802.05957

- Bachlechner+ 2020 — ReZero · 2003.04887

- Zhu+ 2024 — Hyper-Connections · 2409.19606

- Xie+ 2026 — mHC · 2512.24880

optimizer

- Schulz 1933 — Newton-Schulz iteration

- Loshchilov & Hutter 2017 — AdamW · 1711.05101

- Gupta+ 2018 — Shampoo · 1802.09568

- Jordan 2024 — Muon writeup · kellerjordan.github.io

- Bernstein & Newhouse 2024 — Modular Duality · 2410.21265

- Liu+ 2025 — Moonlight (Muon scalable) · 2502.16982

infrastructure

- Lepikhin+ 2020 — GShard · 2006.16668

- Fedus+ 2021 — Switch · 2101.03961

- Tillet+ 2021 — Triton · 2107.13042

- Hwang+ 2022 — TUTEL · 2206.03382

- Peng+ 2023 — FP8-LM · 2310.18313

- Zhang+ 2025 — COMET · 2502.19811

- Wang+ 2025 — TileLang · 2504.17577

- OCP MX Formats v1.0 — 2023 · opencompute.org

- Thinking Machines 2025 — Defeating Nondeterminism in LLM Inference

- 3FS — github.com/deepseek-ai/3FS

post-training & RL

- Hinton+ 2015 — KD · 1503.02531

- Schulman+ 2017 — PPO · 1707.06347

- Sanh+ 2019 — DistilBERT · 1910.01108

- Wortsman+ 2022 — Model Soups · 2203.05482

- Li+ 2022 — Branch-Train-Merge · 2208.03306

- Lightman+ 2023 — Let's Verify · 2305.20050

- Rafailov+ 2023 — DPO · 2305.18290

- Zheng+ 2023 — LLM-as-a-Judge · 2306.05685

- Gu+ 2023 — MiniLLM · 2306.08543

- Yuan+ 2024 — Self-Rewarding · 2401.10020

- Shao+ 2024 — GRPO/DeepSeekMath · 2402.03300

- Zhang+ 2024 — GenRM · 2408.15240

- Lu & TML 2025 — On-Policy Distillation
